At the Nvidia GTC 2026 keynote, leather-clad CEO Jen-Hsun Huang introduced an almost entirely context-free sizzle reel for the company’s new DLSS 5 technology, coming to GPUs near you this fall.

And the reaction has been… probably not what Nvidia expected. In some quarters it’s seen as transformative, in others it’s seen as transformative. On the one hand it has the potential to deliver a new level of photorealism into PC games this year, but on the other it has the more obvious potential to homogenise game graphics, and most especially characters, way beyond developers’ and artists’ original intentions.

Exhibit A: Grace Ashcroft.

The discourse, on the whole, has not been positive. And we certainly have opinions…

Ah, what a mess. While I’m not completely opposed to the idea of generative AI being injected into my games in new and interesting ways, I couldn’t help but react in horror to what Nvidia’s new tech can do to character faces.

I’m sure this is a personal taste thing, but the fact that Grace Ashcroft appears to have been Instagram-filtered into a completely different person is genuinely worrying. Nvidia seems keen to point out that developers will have control over just how far the AI will go in terms of sprucing things up, but turning the tech up to 11 from the get-go was always going to provoke an outcry.

Not to mention the AI beauty standards angle. Plumped lips, heavy eye makeup, a chiselled “I’ve just had expensive surgery” jawline. It all feels a little… well, gross, if I’m honest. I do wonder what the original character artists think about what’s been done to their carefully-crafted models, and whether they feel it’s an improvement.

And then there’s the scene lighting overall. Everything seems to have been hit with a hefty dose of the contrast stick, with what looks suspiciously like our old friend bloom making an overt appearance. Again, personal taste will factor in here—but while some areas look to be much improved, others seem to have been tweaked by a teenager experimenting with Photoshop sliders.

It’s not pleasant imagery to my eyes, and that was before I saw it in motion. Combine the plasticine, overly-shiny AI faces with a moving character model, and my own un-AI-ed visage starts to screw up in YouTube thumbnail-friendly fashion. I’m sure the technology will improve with time, but there’s a horribly uncanny, morphing, shifting effect to the video we’ve been shown to date. Distracting? Yeah, something along those lines.

If the demos had been tweaked to deliver more subtle results, I think most of us would be more curious about the tech. Unfortunately, hitting games with a massive dose of AI vaseline and dubious character cosmetic surgery has provoked quite the negative response. And I, for one, cannot help but agree. It’s gaming, Jim, but not as we know it—and a major misstep in terms of its presentation.

Nvidia’s botched DLSS 5 reveal is undoubtedly obscuring what could be a revolutionary shift in game rendering. That ray-tracing and path-tracing are computationally intensive is very well known. But what if you could essentially insert that kind of realistic lighting into a game without the need to brute-force all those light-bouncing calculations and instead do it with AI? That’s essentially the idea behind DLSS 5.

For now, it’s really just an idea. Nvidia’s DLSS 5 demo actually required a second RTX 5090 GPU running in parallel to support what is clearly a very hefty AI model. But it’s early doors for DLSS 5 and if Nvidia can squeeze the model down into something that can run on mainstream GPUs, it could be genuinely revolutionary.

In the meantime, all of that is being lost thanks to some, at best, heavy-handed AI filters being applied to game character models, presumably because Nvidia wanted to insert some superficial visual impact, to make DLSS 5 look obviously different. That was a very bad call. But let’s be clear, those AI filters are not what’s potentially most interesting and important about DLSS 5.

You could argue Nvidia’s yassification of games is pretty much the same as modders trying to make Skyrim NPCs look like AI-generated porn—I’ve already seen this argument so many times—but you’d be wrong. This ignores the huge influence Nvidia has over gaming. Modding is still comparatively niche, and mods that so dramatically reinterpret a dev’s vision even more so. When Nvidia pulls shit like this, it has a gargantuan impact. This is one of the biggest, most important companies in gaming saying “Hey, games should look like this”, and what “this” is is a fucking nightmare. Uncanny, creepy and unnecessary, completely circumventing the artistic vision behind a game. It’s the homogenisation of videogame art, and that sucks.

Like so much AI-generated slop, it just looks terrible. Like some Twitter incel ‘shopping a character to make them look hotter, this soulless AI filter just ends up making characters look like dead-eyed sex dolls or rubber-faced mannequins relegated to the darkest corners of Tussauds. Anyone who thinks Nvidia’s demonstration enhances realism (ignoring the fact that most games are not attempting realism) needs to go outside for a minute and look at some real humans. Because this ain’t it.

Any studio executive or publisher who approves of this mess has completely sold out and shouldn’t be making games. They should just quit and join one of countless companies doing the AI grift—they’re always looking for ethically stunted evangelists.

I’ve got to second Fraser, here—not only do I think this AI filter makes every single game look like it’s been bound up in glossy, tasteless saran wrap, I also think it completely defeats the point in the first place. One thing AI bros don’t get about art is that it’s immensely complicated to make, especially at the kind of scale required for videogames.

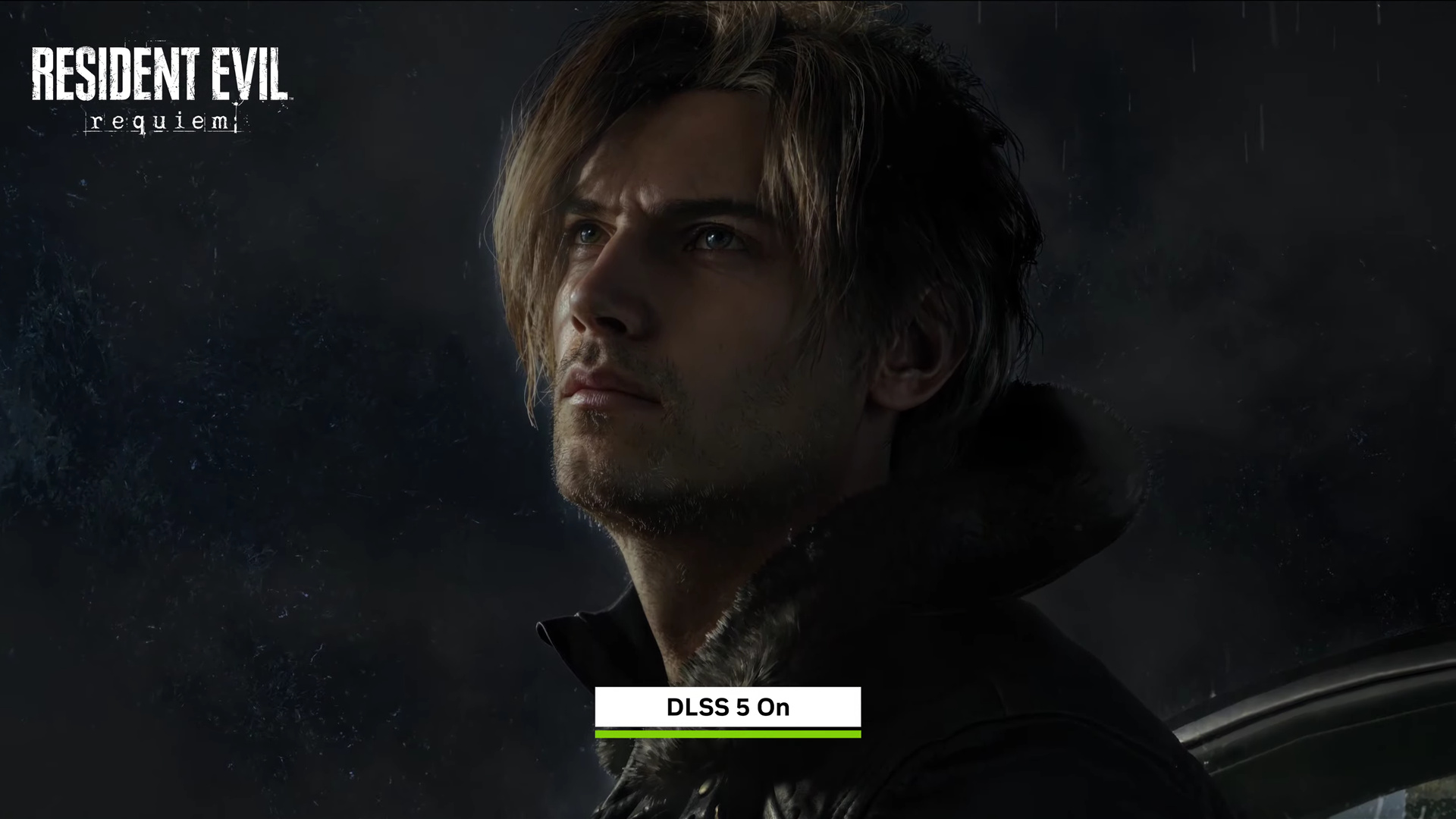

Take Resident Evil: Requiem, for example. Lighting has always been an art in horror, even when bound by technical limitations—sometimes enhanced by them, as was the case with the iconic, conveniently draw-distance reducing fog in Silent Hill 2. And it’s clear some very talented people have taken great care to produce a specific brand of gloominess in Requiem.

Watching Nvidia puff out its chest to inspirational music as a slow wipe takes that careful work out back and shoots it dead is so typically devoid of understanding or taste that it hits me as parody. You have to really (and I mean, really) not be paying attention to anything you’re watching or looking at to go “yep, this is great.”

See Leon stepping out of the car, his face cast in dark, oppressive shadow. Then watch the Nvidia slop filter make him glow like he’s in Moulin Rouge! Isn’t this better? Don’t you like this? Look, it’s got more graphics in it.

You could make the argument that this sort of thing might be welcome in games that, say, aren’t as “artistically dense”. I’m aware that certain sports games get mechanically produced and shot out of their overlords’ great machine minds on a yearly basis, so maybe this kind of tripe’s more welcome there.

Humbug, I say. Not only is that defeatist, and also harsh on the doubtless time-starved people trying to make those games look presentable on a wicked schedule, but it’s also no great excuse. We’re better than this, surely. If a game looks bad, I don’t think it’s worth whacking poor Grace Ashcroft with the dystopic Instagram filter (let alone the other ethical concerns with AI) just to let Starfield get away with looking a bit gray and uninteresting.

I also don’t find the idea that this sort of thing might be good in the hands of developers all that convincing, because here’s the thing: Like a lot of AI grist, these tools are doing things that developers are, writ large, already very good at. They’ve been inventing their way past technical limitations for decades with design, not an amalgam of stolen work pumped into a grey goo filter.

I’m kinda frustrated, a bit disappointed, but not entirely surprised at what the reaction has been around the DLSS 5 reveal last night. AI Grace is the thread that’s running through a huge number of the complaints around the technology showcase. And if that’s your first experience of what DLSS 5 is doing, I can completely understand the kneejerk antipathy that is prevalent. Capcom has got too excited with DLSS 5, turned all the AI controls up as far as they’ll go and let the model go to town on what it thinks Ms. Ashcroft ought to look like.

And it’s not good, feels entirely like any other AI filter, and leaves you feeling a bit icky.

I also get it if you are wholeheartedly against artificial intelligence in any of its myriad forms and want it kept as far away from your favourite hobby as possible.

But I find it disappointing, though again not surprising, that there’s no nuance to the discussion. I’ve been shut down within the PC Gamer team for expressing any feelings of positivity around this nascent technology, but Resident Evil: Requiem isn’t the only game presented here. We still don’t know a ton about how it’s used by devs, and you can already see in the other titles shown off where devs have been more measured in their use of the tech.

It’s a day after the event, and I’m still processing what I saw and what we’ve been told, and now I really want to get my hands on it and talk to some of the people behind it before damning the whole enterprise to development hell. There are things in that presentation, after all, which I believe do look good. The environmental lighting on Assassin’s Creed Shadows and the effectiveness of the implementation in the Zorah tech demo both speak to the different levels of usage that will be in the hands of the devs.

Right now, I understand the fear, but don’t buy the death of artistic expression argument, and until I’m shown that it is in fact just a binary on/off switch (which I don’t believe it is), and that developers are going to be forced to use it, then I’m not going to be convinced that this will become something that will destroy artistry in games development.

The invention of the camera was demonised by painters, and yet artistic expression flourished after the first shutter went click.

Developers specifically chasing a certain art style will still do that. They may just use DLSS 5 for the lighting, or for materials, or they may eschew it altogether. Which will be a choice, as it will be for PC gamers. And I kinda like choice.

But there are other issues, exemplified by Capcom’s heavy-handed usage. Devs are going to have to take some responsibility to avoid a period of complete homogeneity, as we had when they all got excited about bloom and rim lighting.

Then there are further issues, such as performance. Right now it is seriously computationally intensive, and we do not live in a space where computation is affordable or even accessible. If you’re turning ray tracing off in today’s games, you’re going to be doing the same for DLSS 5. And then there’s the matter of even calling it DLSS 5: this does not feel like what I understand to be super sampling.

Though maybe that’s the thing. I’m an old man who likes playing games, so maybe I just don’t understand technology or art.

Time to risk alienating the entire PC gaming community here—but there has to be someone willing to bring some balance to the force. Look, I hate AI too, especially AI images and videos. But not every DLSS 5 video and image here looks bad.

I get the reaction to the Resident Evil and Hogwarts Legacy demo, sure, but to my eyes, at least, what we’ve seen of Starfield, Fifa, Assassin’s Creed, and Oblivion Remastered looks great. This variation only adds credence to Nvidia’s claim that “game developers have full, detailed artistic control over DLSS 5’s effects to ensure they maintain their game’s unique aesthetic.”

Assuming this is true (and given the variation shown, there’s no reason to assume otherwise), the only bad decision here was to put a couple of poor examples, over-adjusted towards an unrealistic, glossy aesthetic, at the forefront. That doesn’t make for a good marketing recipe when combined with a public that’s been rightly primed to sniff out and protest the AI slop that many companies seem to keep championing.

Now, as much as I enjoy the memes, I can see the case that the popular discussion is overlooking the things DLSS 5 does right, such as really making environmental lighting shine. However, I’d counter that the reason yassified Grace Ashcroft dominates the conversation is Nvidia’s tone-deaf marketing.

It is staggering to me that a company priding itself on keeping its finger on the pulse of tech would choose to showcase its snazzy new upscaling right out of the gate with gameplay footage that looks as though it’s been run through a wholly unsubtle TikTok beauty filter, and don’t even get me started on the fact that the live demos at GTC currently take two GPUs to do it.

I can understand wanting to pull out all the stops to ensure the differences between DLSS 5 on and off are obvious to even a lay audience, but I think it’s safe to say that tactic has backfired for Nvidia. Here’s hoping DLSS 5 can make a more thoughtful, better implemented second impression later this year.

There’s a moment in Digital Foundry’s video hands-on with DLSS 5 that, to me, communicates how thoroughly we’ve lost the plot somewhere along the way: The tech is toggled off and on while interacting with a wood elf in Oblivion Remastered, which produces the effect of watching as a character is suddenly wracked into a sleep paralysis demon garbed in the ill-fitting skin of a human man, each added wrinkle and manufactured plane traced with a searing, lacerating sharpness.

“We’ve never seen elves look this realistic,” the commentary says. “It’s crazy.”

And I just want us to take a moment and ask ourselves: Is a photorealistic elf actually a better one? Are we more interested in videogames as art, or as tech demos?

I’m posing the question because these early looks at DLSS 5, despite Nvidia’s insistence to the contrary, demonstrate a fundamental contempt for artistic intent. Nvidia’s wood elf yassification might produce an image with arguably higher visual fidelity, but it’s completely changed the scene in the process. The lighting isn’t improved. It’s different. Color tones and intensities have been warped; characters have been retrofitted with entirely different affects; the basic sense of atmosphere has been reshaped according to the arbitrary priorities and intrinsic biases of the technology.

When it’s intimated that this is all worth it if it’s in service of photorealism, I feel compelled to note that there’s a lot of dogshit photography in the world. Higher fidelity is fine, but if it’s overwriting the artistry behind the image, it’s not worth the cost.