We’ve been at the gates of the AI era for some time, but only in the last few months have we seen real movement towards an AI-centric web, primarily thanks to OpenClaw. The question is how the rest of the web, beyond the OpenClaw ‘AI bros’, will respond. On that front, Cloudflare has thrown its hat into the ring, predicting an agent-neutral web.

That’s according to a recent blog post in which the cloud services behemoth outlines not just why this is the case but also what can and should be done about it. The key solution, according to Cloudflare, is to prove “behavior without proving identity”, and to do so more actively than the passive fingerprinting that servers usually rely on.

The problem with the current web is that it wasn’t really designed to verify legitimate and acceptable connections in a landscape of widespread AI. And the solution can’t be to ban all AI because, as far as the server is concerned, “the distinction between bots and humans is moot.”

“There is no meaningful difference between the AI assistant booking concert tickets and the human who would have done so manually. Both are distributed. Both need anonymity. In each case, an origin would want to create less friction for users who wish to use the service as intended, rather than abuse it.”

What does matter, however, is what the client, whether human or AI, is using the website’s data for. That’s partly because server capacity is only worth allocating to connections that, on the whole, will offset the cost of running that server, for instance through advertising:

“Website owners cannot tell if their fetched content is serving one private report (possibly distorted, possibly unattributed) or being ingested to train a model for a million users, which disrupts the predictable (and monetizable) traffic that keeps their sites online.”

If there isn’t a way around this, Cloudflare predicts a shift towards a web that’s more restrictive and expensive for end-users:

“We can expect sites to pivot: requiring an account to see any content, or tying access to a stable identifier. This means no more ad-supported login-free articles, no more ‘three free articles a month.’ Other content businesses may move away from the Web completely, offering their data and services directly to AI vendors for a fee, or within walled gardens operated by large platforms.”

The solution, according to Cloudflare, is to move towards some kind of active validation on the client side that still retains privacy: “Instead of collecting passive signals, the server can ask the client for an active privacy-preserving signal.”

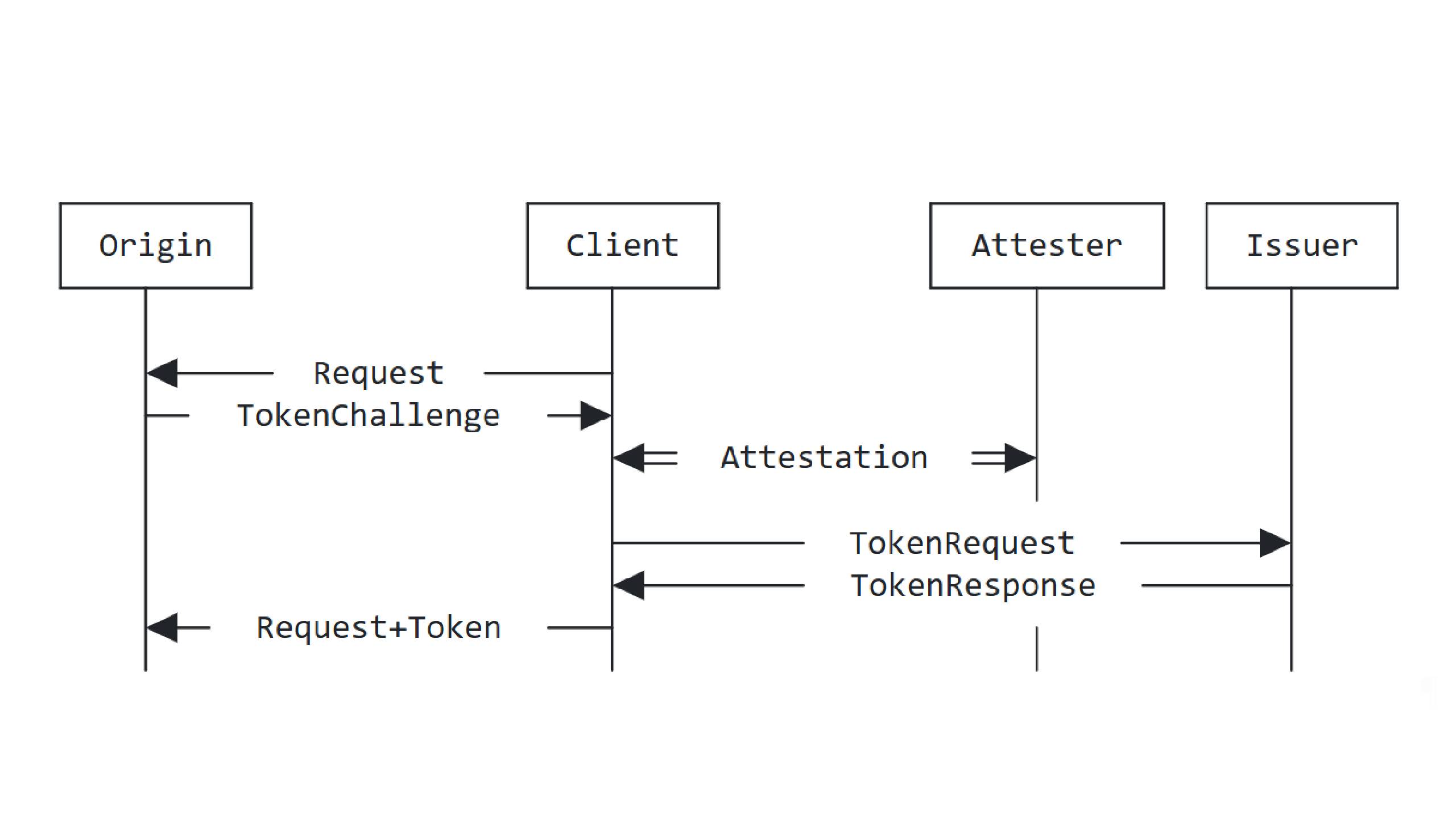

For instance, the company supports Privacy Pass, a protocol and extension that essentially lets you prove you passed a check without the website or server learning anything about you other than that you’re verified. This is unlike cookies, the usual way for a website to remember that you passed a check (such as a CAPTCHA), which track you between sessions. And the way it’s designed means that even the party issuing the Privacy Pass token to you does so blindly, without being able to link it to you.
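To make the “blind” part a little more concrete, here is a minimal sketch of an RSA blind signature, one well-known technique for this kind of issuance. It is a toy under loud assumptions, not Privacy Pass itself: the key is far too small to be secure, there is no padding or wire format, and every name in it (`blind`, `issue`, `unblind`, `verify`) is made up for illustration.

```python
import hashlib
import secrets
from math import gcd

# Toy issuer key built from two small Mersenne primes. Illustration only:
# a key this size is trivially breakable, and real schemes also add padding.
P, Q = 2**31 - 1, 2**61 - 1
N = P * Q
E = 65537
D = pow(E, -1, (P - 1) * (Q - 1))  # private exponent, known only to the issuer


def hash_to_int(message: bytes) -> int:
    """Map a message to an integer modulo N."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N


def blind(message: bytes) -> tuple[int, int]:
    """Client: hide the message behind a random blinding factor r."""
    m = hash_to_int(message)
    while True:
        r = secrets.randbelow(N - 2) + 2
        if gcd(r, N) == 1:
            break
    return (m * pow(r, E, N)) % N, r


def issue(blinded: int) -> int:
    """Issuer: sign the blinded value without learning the message behind it."""
    return pow(blinded, D, N)


def unblind(blind_signature: int, r: int) -> int:
    """Client: strip the blinding factor to get a signature on the real message."""
    return (blind_signature * pow(r, -1, N)) % N


def verify(message: bytes, signature: int) -> bool:
    """Website: check the token using only the issuer's public key (N, E)."""
    return pow(signature, E, N) == hash_to_int(message)


if __name__ == "__main__":
    nonce = secrets.token_bytes(16)      # hypothetical token content
    blinded, r = blind(nonce)            # client side
    blind_signature = issue(blinded)     # issuer sees only `blinded`
    token = unblind(blind_signature, r)  # client side
    print(verify(nonce, token))
```

The issuer only ever sees `blinded`, which is masked by the client’s random `r`, so when the finished token later turns up at a website the issuer cannot match it to the request it signed. The website, in turn, learns only that someone holds a valid token.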

As always, though, things aren’t black and white: “Once the infrastructure exists to verify anonymous proofs, what gets proven can expand.”

In other words, there’s a risk that such technology could develop in a direction that imposes strict requirements on users before they can be verified, such as having a Google account.

To combat this, Cloudflare thinks we should consider a litmus test for whether a technology is acceptable or not: “do the methods allow anyone, from anywhere in the world, to build their own device, their own browser, use any operating system, and get access to the Web. If that property cannot hold, if device attestation from specific manufacturers becomes the only viable signal, we should stop.”

To my eyes, at least, there are a lot of parallels between this and the broader problems surrounding age verification and liveness checks. It seems the best solution will lie in developing technologies and protocols that are open and decentralised, blind or zero-knowledge, limited, and privacy-preserving. It’s just a question of whether the world will wait for those technologies to be developed and implemented, or march ahead with more problematic solutions.