Just because you can doesn’t mean you should. Wise words and, dare I say, highly relevant to the pursuit of ever more realistic VR experiences. To wit, would you really want to include accurate odours?

Hold that thought, because we haven’t addressed the “can you” bit. It turns out a group of researchers think they can, courtesy of a new approach that involves using ultrasound to stimulate the scent-processing part of the brain. Indeed, they claim the technology can be used not just for smell but also as a highly efficient method for inputting data into the brain. Yes, really.

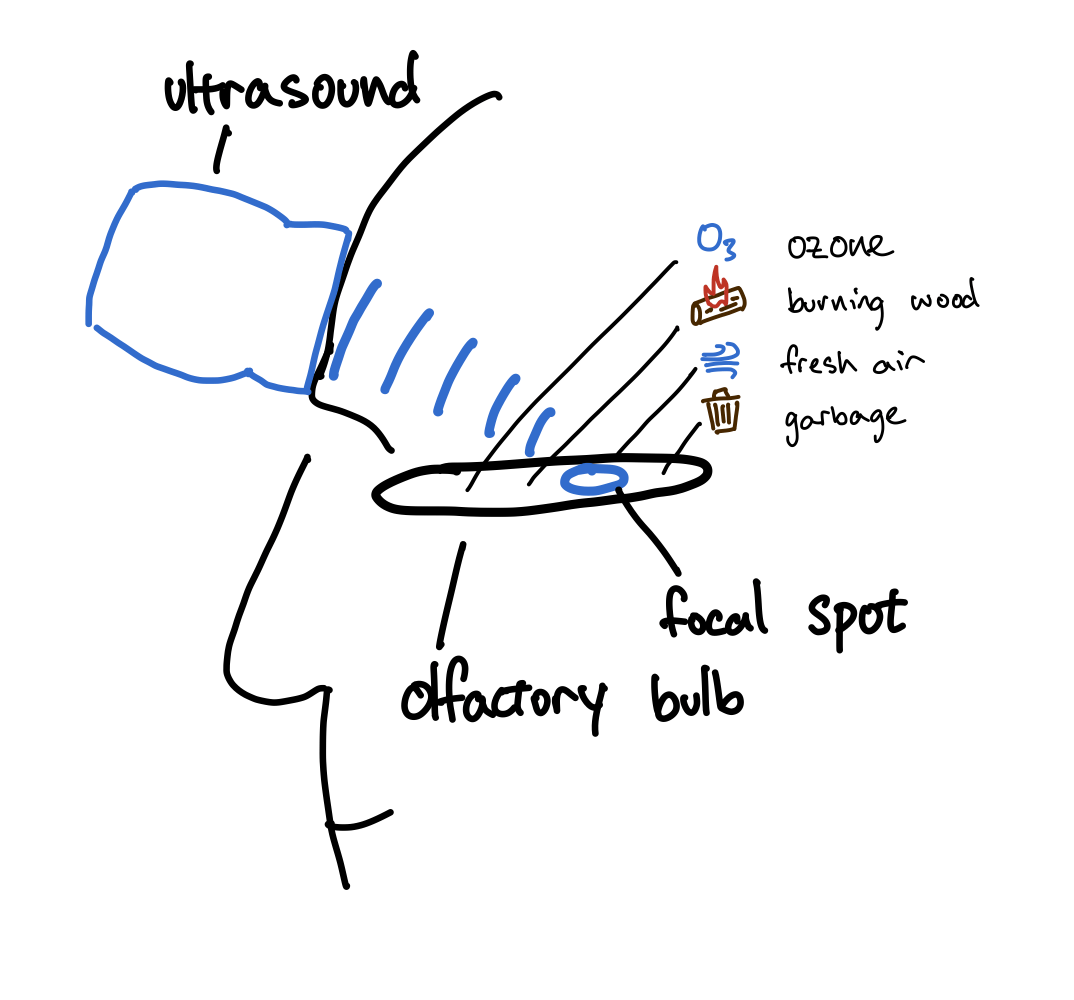

“We pointed an ultrasound probe at the scent-processing region of the brain to obtain different sensations. Different focal spots corresponded to different smells, which we’ve replicated first-try on two people and validated with a blind trial,” the researchers say in a blog post.

The mere suggestion of such a technology has been enough, inevitably, to make some observers recoil. One commenter on X imagined the scenario of “walking into a VR chat lobby and immediately projectile vomiting as your headset psychically projects the scent of yeast-infected rotting fish from the pregnant fox OC on the other side of the room while it tanks your framerate.” Charming.


Specifically, the team says the technology works by stimulating the olfactory bulb. The bulb’s location makes stimulation with ultrasound particularly tricky, the team says, but not impossible.

“We found that you can place the transducer on the forehead and aim the ultrasound downward towards the olfactory bulb. While this isn’t a perfect solution because the frontal sinuses can weaken the signal, careful device positioning above the sinuses still allows us to reach our general target region.”

Fine-tuning involved using an MRI scan of the subject’s skull to optimise the positioning of the ultrasound pad, then adjusting various parameters of the ultrasound directed at the olfactory bulb.

Those include the frequency, the focal depth and the use of pulsed output. Thus far, replicated smells include “fresh air, with a lot of oxygen,” “the smell of garbage, like few-day-old fruit peels,” and “a campfire smell of burning wood.”

Anywho, it seems that areas of the olfactory bulb are associated with particular smells and are quite densely packed. “The distance between freshness and burning was ~3.5 mm,” the researchers say.

Exactly how serious all this is remains hard to gauge. There’s clearly an element of whimsy in the diagrams created by the team, for instance. Moreover, I’m not sure what to make of the claim that this simulated smell technology can be used as an interface for injecting data into the brain.

Maybe I should let the research team explain this bit:

“The nose has 400 distinct receptor types, and we can distinguish subtle combinations of their activations, so they could serve as a channel of writing directly into the brain, as a means of non-invasive neuromodulation.

“The olfactory system potentially allows writing up to 400, if not 800 due to two nostrils, dimensions into the brain. That is comparable to the dimensionality of latent spaces of LLMs, which implies you could reasonably encode the meaning of a paragraph into a 400-dimensional vector.

“If you had a device which allows for this kind of writing, you could learn to associate the input patterns with their corresponding meanings. After that, you could directly smell the latent space. A bit of ultrasound, a breath in – and you understood a paragraph.

“People are able to develop synesthesia – being able to hear colors and see smells, and it might be possible to extend that to semantics. However, at this stage it is speculative.”

To all this you can also add other questionable pursuits of VR fidelity, including an electronic tongue that can simulate, among other things, fish soup, and another device that zaps your neck and can induce feelings of motion. I’ll let you decide whether any of this is a good idea.